God is a baker

He does not play dice

Making dough is one of the most mathematical things you can do in the kitchen, and the mathematics goes far beyond measuring out ingredients. As you fold and compress dough, you are carrying out topological deformations. Kneading has been canonized in chaos theory as the baker’s map, in which a dynamical system is mixed by mapping it onto itself over and over again.
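For concreteness, here is a minimal Python sketch of the standard baker’s map on the unit square: stretch the square to twice its width and half its height, cut it down the middle, and stack the right-hand piece on top of the left. The function names are our own; the formula is the usual textbook one.

```python
def baker_map(x: float, y: float) -> tuple[float, float]:
    """One kneading step on the unit square [0, 1) x [0, 1).

    Stretch horizontally by 2, squash vertically by 1/2, cut at x = 1,
    and stack the right-hand piece on top of the left-hand piece.
    """
    if x < 0.5:
        return 2 * x, y / 2            # left half -> bottom half
    return 2 * x - 1, (y + 1) / 2      # right half -> top half


def baker_map_inverse(x: float, y: float) -> tuple[float, float]:
    """Undo one step: the map is deterministic in both directions."""
    if y < 0.5:
        return x / 2, 2 * y
    return (x + 1) / 2, 2 * y - 1


if __name__ == "__main__":
    point = (0.3, 0.7)
    for step in range(4):
        point = baker_map(*point)
        print(step + 1, point)
```

Every step can be undone exactly by the inverse, so nothing random happens anywhere; all the scrambling comes from stretching and folding. One practical caveat: in binary floating point each step shifts one bit of x out of existence, so float orbits of typical starting points collapse after roughly 50 steps, and exact rational arithmetic is the safer choice for long runs.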

Mixing is a chaotic but not a random act. It occurs because nonlinear self-interactions cause a system to reshape itself so completely that, over enough iterations, it comes arbitrarily close to every configuration available to its matter. (Strictly, visiting every configuration is called ergodicity; mixing is the stronger property that any initial blob ends up spread evenly over the whole system.) This is central to an area of mathematical physics called ergodic theory, the theory that underlies much of statistical physics.

This is not without constraints, of course. The atoms won’t undergo nuclear fusion or spread out across light-years unless that is part of how you defined the system. Yet mixing ensures that something like dough will not only reach its maximal entropy; given enough time, its enormous collection of molecules will also pass through every possible arrangement. And even without that much time, the molecules will statistically appear as if they had.
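The rise toward maximal entropy can be watched directly. The sketch below, an illustration under our own assumptions (10,000 points, an 8 × 8 grid, a starting blob confined to one grid cell), kneads a cloud of points with the baker’s map and tracks the Shannon entropy of how the points are spread over the grid cells. The entropy starts at zero and climbs to essentially its maximum, ln 64 ≈ 4.16, within ten kneads.

```python
import math
import random


def baker_map(x, y):
    """Standard baker's map on the unit square (stretch, cut, stack)."""
    if x < 0.5:
        return 2 * x, y / 2
    return 2 * x - 1, (y + 1) / 2


def coarse_entropy(points, bins=8):
    """Shannon entropy (in nats) of the points binned on a bins x bins grid."""
    counts = {}
    for x, y in points:
        cell = (int(x * bins), int(y * bins))
        counts[cell] = counts.get(cell, 0) + 1
    n = len(points)
    return -sum((c / n) * math.log(c / n) for c in counts.values())


def entropy_history(n_points=10_000, steps=10, bins=8, seed=0):
    """Start with a small 'blob of dough' in one grid cell and knead it."""
    rng = random.Random(seed)
    # Assumed initial condition: every point inside the bottom-left grid cell.
    points = [(rng.random() / bins, rng.random() / bins) for _ in range(n_points)]
    history = [coarse_entropy(points, bins)]
    for _ in range(steps):
        points = [baker_map(x, y) for x, y in points]
        history.append(coarse_entropy(points, bins))
    return history


if __name__ == "__main__":
    for step, s in enumerate(entropy_history()):
        print(f"step {step:2d}: entropy = {s:.3f} (max {math.log(64):.3f})")
```

Because the baker’s map preserves area, the exact (fine-grained) distribution never actually spreads out: the points always occupy the same total area. What rises is the coarse-grained entropy measured at the resolution of the grid, which is the kind of entropy any real, finite-resolution measurement sees.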

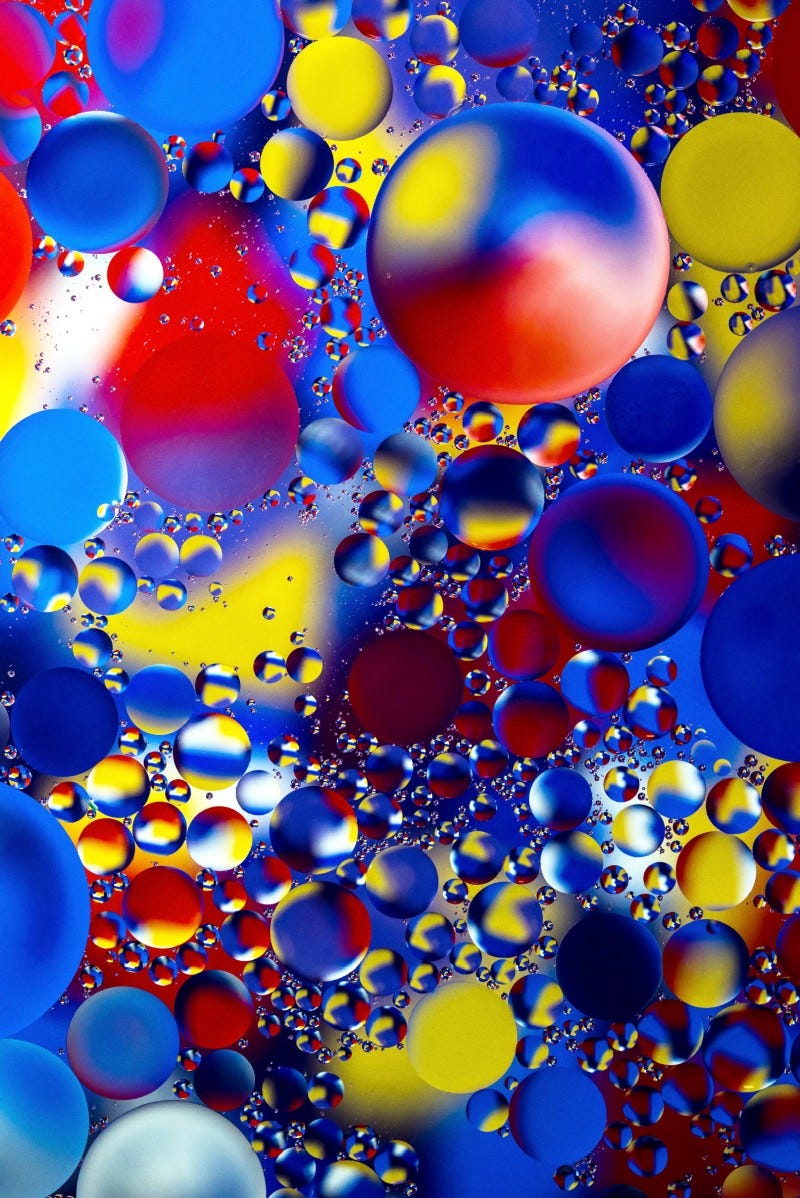

In our universe, mixing is one of the most fundamental operations, far more so than randomness, which at its core is the result of mixing at many different length scales. For example, the size of the cut, as in the picture above, sets the largest length scale. Squashing then pushes the mixing down from that top scale to smaller and smaller ones, so that all length scales are ultimately affected.
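The downward push from the scale of the cut to ever finer scales can be tracked exactly. In this sketch (exact fractions, our own helper names), a dark layer of dough starts as the bottom half of the square. Because the baker’s map stretches every full-width strip across the whole square and stacks the two halves, each knead doubles the number of dark strips and halves their thickness, while the total dark area stays at exactly one half.

```python
from fractions import Fraction


def knead_strips(strips):
    """Apply one baker's-map step to full-width horizontal strips [a, b).

    Each strip's left half lands in the bottom half of the square and its
    right half in the top half, both stretched to full width:
    [a, b) -> [a/2, b/2) and [(a+1)/2, (b+1)/2).
    """
    out = []
    for a, b in strips:
        out.append((a / 2, b / 2))
        out.append(((a + 1) / 2, (b + 1) / 2))
    return sorted(out)


def strip_cascade(steps):
    """Track the dark layer (initially the bottom half) through several kneads."""
    strips = [(Fraction(0), Fraction(1, 2))]
    history = [strips]
    for _ in range(steps):
        strips = knead_strips(strips)
        history.append(strips)
    return history


if __name__ == "__main__":
    for n, strips in enumerate(strip_cascade(5)):
        width = strips[0][1] - strips[0][0]
        area = sum(b - a for a, b in strips)
        print(f"step {n}: {len(strips):2d} strips of width {width}, total area {area}")
```

After n kneads there are 2^n strips, each 1/2^(n+1) thick: the pattern created at the largest scale has been pushed down to scales that shrink geometrically, so a coarse observer soon sees a uniform grey.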

What does that mean?

The basic idea is that the baker’s map, iterated indefinitely in a chain (see Gaspard), offers no predictability to anyone who knows the starting point only to finite precision: all components are shuffled so completely that they behave as if random.
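Why the shuffling looks random can be seen from the x-coordinate alone, which follows the doubling map x → 2x mod 1. Whether the point lands in the left or right half at step n is simply the n-th binary digit of the starting x, so iterating the map reads out the initial condition one digit at a time, like a sequence of coin tosses (technically, the baker’s map is equivalent to a Bernoulli shift). This Python sketch, using exact fractions and our own helper names, checks that equivalence for one arbitrary starting point.

```python
from fractions import Fraction


def left_right_record(x0, steps):
    """Which half of the square (0 = left, 1 = right) the point occupies at
    each step of the baker's map. Only the x-coordinate decides this, and x
    evolves by the doubling map x -> 2x mod 1, computed here exactly.
    """
    x = Fraction(x0)
    record = []
    for _ in range(steps):
        bit = 1 if x >= Fraction(1, 2) else 0
        record.append(bit)
        x = 2 * x - bit  # same as 2x mod 1
    return record


def binary_digits(p, q, n):
    """First n binary digits of p/q (with 0 <= p < q), by integer arithmetic."""
    return [(p * 2**k // q) % 2 for k in range(1, n + 1)]


if __name__ == "__main__":
    p, q = 12345, 98989  # arbitrary illustrative starting point x0 = p/q
    print(left_right_record(Fraction(p, q), 40))
    print(binary_digits(p, q, 40))
```

If you know the starting point to 40 binary digits, you can predict exactly 40 left/right outcomes and nothing beyond. For almost every starting point, the digits that follow have the frequencies of fair coin tosses (Borel’s normal number theorem), even though the map itself is entirely deterministic.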

Stochastic systems arise when you add a random process like this to a deterministic process, like an oscillating spring or a ball rolling down a hill. The deterministic process provides the overall motion, while the randomness gives a statistical distribution around that motion. The strength of the randomness dials up or down depending on the length scale involved, but most systems show some stochastic behavior.
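A standard way to write such a system is a Langevin equation: a deterministic drift plus a random kick. The sketch below integrates the simplest case, an overdamped particle settling to the bottom of a harmonic well (a heavily damped spring), with the Euler-Maruyama scheme. The parameter values and the function name are illustrative assumptions.

```python
import math
import random


def noisy_spring(x0=3.0, k=1.0, diffusion=0.5, dt=0.01, t_end=5.0,
                 n_paths=1000, seed=1):
    """Overdamped particle in a harmonic well plus white noise.

    dx = -k x dt + sqrt(2 D) dW, integrated with the Euler-Maruyama scheme.
    The drift -k x is the deterministic pull toward the bottom of the well;
    the dW term is the random kick. Returns the final positions of n_paths
    independent runs.
    """
    rng = random.Random(seed)
    n_steps = int(round(t_end / dt))
    kick = math.sqrt(2 * diffusion * dt)  # standard deviation of each kick
    finals = []
    for _ in range(n_paths):
        x = x0
        for _ in range(n_steps):
            x += -k * x * dt + kick * rng.gauss(0.0, 1.0)
        finals.append(x)
    return finals


if __name__ == "__main__":
    xs = noisy_spring()
    mean = sum(xs) / len(xs)
    var = sum((x - mean) ** 2 for x in xs) / (len(xs) - 1)
    print(f"mean {mean:.3f} (deterministic part: {3.0 * math.exp(-5.0):.3f})")
    print(f"variance {var:.3f} (theory D/k = 0.5)")
```

The mean of the final positions follows the deterministic decay toward the bottom of the well, while their spread settles near D/k: the drift supplies the overall motion and the noise supplies the distribution around it.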

This relationship between chaotic mixing and randomness is fundamental to all of statistical physics, but I argue that chaotic mixing is actually more fundamental. The reason is that, at least for classical systems like gases, molecules are the fundamental units. Thus, chaotic mixing does not occur below the level of molecules. Rather, molecules have thermal behavior that results from a combination of kinetic energy and collisions with other molecules. And models that treat molecules (at least small ones, like gas molecules) essentially as billiard balls agree well with experimental measurements.

The interaction of two (or more) molecules at this small scale is deterministic and chaotic, not random. Thus, features that we associate with randomness arise from deterministic chaos, not true randomness.

Another interesting feature of statistical physics is that randomness is not a critical ingredient for making predictions. It is commonly used in simulations, and unpredictable thermal fluctuations are certainly observed, but statistical predictions would often work just as well if the fundamental constituents were not random but simply well mixed. It is the mixing that matters, because the mixing causes elements to end up in the right configurations for the right amounts of time.
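A deterministic mixing map can indeed stand in for random sampling. The sketch below uses Arnold’s cat map, a standard textbook example of a deterministic, area-preserving, mixing map of the unit square (swapped in here as an illustration, not taken from the sources cited), and averages the observable xy along a single orbit. No random numbers are used anywhere, yet the time average lands close to the uniform-distribution value of 1/4.

```python
import math


def cat_map(x, y):
    """Arnold's cat map: deterministic, area-preserving, and mixing on the torus."""
    return (2 * x + y) % 1.0, (x + y) % 1.0


def time_average(f, x0, y0, n_steps):
    """Average f along one deterministic orbit of the cat map."""
    x, y = x0, y0
    total = 0.0
    for _ in range(n_steps):
        total += f(x, y)
        x, y = cat_map(x, y)
    return total / n_steps


def xy(x, y):
    """Observable whose average over the unit square is exactly 1/4."""
    return x * y


if __name__ == "__main__":
    avg = time_average(xy, math.sqrt(2) - 1, math.sqrt(3) - 1, 200_000)
    print(f"time average {avg:.4f} vs space average 0.2500")
```

The agreement comes from mixing alone: the orbit spends the right fraction of its time in each region of the square, which is all the average needs.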

Take something like temperature or pressure, which are the result of statistical processes. What matters for temperature is that molecules impart the right amount of energy in the right places to reflect an average amount of kinetic energy per molecule. Moreover, as I mentioned before, this mixing has to be fractal in nature. You need to see mixing happening at all scales so that, no matter how small and fast your thermometer is, you will still read the same average temperature. But this ends when you get down to the size of molecules. If your thermometer is on the scale of individual gas particles, it won’t register a meaningful temperature at all.
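The tiny-thermometer problem is easy to quantify. In one dimension, with k_B = m = 1, a thermometer that averages v² over n molecules reads the temperature with a relative spread of about √(2/n). The sketch below draws velocities from the Maxwell-Boltzmann (Gaussian) distribution purely for convenience; a well-mixed deterministic source with the same statistics would serve equally well. The function name and defaults are our own. A one-molecule "thermometer" fluctuates by more than 100% of its reading, while one averaging a thousand molecules is already good to about 4.5%.

```python
import math
import random
import statistics


def thermometer_readings(block_size, n_readings=500, kT_over_m=1.0, seed=2):
    """Temperature estimates (units with k_B = m = 1) from a 'thermometer'
    that averages v^2 over block_size molecules in one dimension.

    Velocities are Gaussian (Maxwell-Boltzmann) purely for convenience.
    """
    rng = random.Random(seed)
    sigma = math.sqrt(kT_over_m)
    readings = []
    for _ in range(n_readings):
        vs = [rng.gauss(0.0, sigma) for _ in range(block_size)]
        readings.append(sum(v * v for v in vs) / block_size)
    return readings


if __name__ == "__main__":
    for size in (1, 10, 100, 1000):
        r = thermometer_readings(size)
        spread = statistics.stdev(r) / statistics.mean(r)
        print(f"{size:5d} molecules: relative spread {spread:.3f} "
              f"(theory {math.sqrt(2 / size):.3f})")
```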

Thus, models that treat these particles as having a temperature because their motion is random get away with it only because they can safely ignore the fact that there is a smallest length scale. They assume that you aren’t measuring temperature with a tiny thermometer!

Why does this matter?

It matters because I believe that the universe is not fundamentally random. God does not play dice. Rather, God is a baker who bakes all the way from the scale of the universe down to the Planck length. Below that, the baking stops. Even when it comes to quantum phenomena, it is all just baking, but in one additional dimension, with space and time themselves being kneaded.

If that is so, then randomness is an illusion and models of randomness are approximations of mixing.

In the 1960s and ’70s, Nobel laureate Kenneth Wilson, perhaps one of the greatest unsung heroes of both statistical physics and quantum field theory, developed the modern renormalization group, a way to model physical systems at particular length scales and to relate those scales to one another. From Wilson’s legacy we get the modern understanding of stochastic systems and, in particular, of phase transitions like water freezing or evaporating, or magnets magnetizing or demagnetizing.

It is from this that we can understand why physical systems mix not just at one length scale but at all length scales, down to some fundamentally small “atom” or size, be it a molecule or the Planck length. Because such systems are “self-similar” across length scales, they behave similarly at different scales, but with stronger or weaker self-interactions, which can make them mix more strongly at one scale than at another.
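Wilson’s program is hard to show in a few lines, but its simplest exactly solvable cousin is not. In the one-dimensional Ising chain (a textbook example substituted here, not a calculation from Wilson’s papers), summing out every other spin doubles the length scale and maps the dimensionless coupling K to K′ with tanh K′ = tanh² K. Iterating that rule shows how the strength of the self-interaction changes from scale to scale. The function names are our own.

```python
import math


def decimate(K):
    """One exact real-space RG step for the zero-field 1D Ising chain.

    Summing out every other spin doubles the lattice spacing and maps the
    nearest-neighbour coupling K = J / (k_B T) to K' with tanh K' = tanh(K)**2.
    (For very large K, tanh(K) rounds to 1.0 in floating point and atanh fails.)
    """
    return math.atanh(math.tanh(K) ** 2)


def rg_flow(K, steps):
    """Couplings seen at length scales 1, 2, 4, ..., 2**steps lattice spacings."""
    flow = [K]
    for _ in range(steps):
        K = decimate(K)
        flow.append(K)
    return flow


if __name__ == "__main__":
    for n, K in enumerate(rg_flow(2.0, 8)):
        print(f"scale {2 ** n:4d}: K = {K:.6f}")
```

The coupling shrinks at every step, so at long enough distances the chain looks like independent, disordered spins; this is the renormalization-group way of saying the one-dimensional Ising model has no phase transition at any nonzero temperature. In two and three dimensions the corresponding flow has a nontrivial fixed point, and that fixed point is the critical point.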

Some systems, for example, are smooth and well-behaved at large scales but frothy and turbulent at small ones. Others are exactly the opposite, being complex and chaotic over long distances and times but simple and easy to predict over short ones. (Cellular automata are an example: each cell follows a simple local rule, yet the overall pattern can be wildly complex.)
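A classic instance is the elementary cellular automaton known as Rule 30. In the minimal sketch below (our own function name), each cell updates from itself and its two neighbours with the rule new = left XOR (centre OR right). Step to step, everything is local and trivially predictable, yet the pattern that grows from a single live cell looks disordered at large scales.

```python
def rule30_rows(steps):
    """Evolve Rule 30 from a single live cell.

    Each cell's next state depends only on itself and its two neighbours:
    new = left XOR (centre OR right). Cells beyond the edges count as 0,
    and the row is wide enough that the pattern never reaches them.
    """
    width = 2 * steps + 1
    row = [0] * width
    row[steps] = 1
    rows = [row]
    for _ in range(steps):
        padded = [0] + row + [0]
        row = [padded[i] ^ (padded[i + 1] | padded[i + 2]) for i in range(width)]
        rows.append(row)
    return rows


if __name__ == "__main__":
    for r in rule30_rows(15):
        print("".join("#" if c else "." for c in r))
```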

Take something like air.

Air behaves very differently at large scales than at small ones, which is why airplanes can cut through the air with large fixed wings while flies have to buzz through eddies and currents that an airplane would simply ignore. This has a lot to do with the air’s viscosity, which is usually traced to the random motion of air molecules but could equally be described as how thoroughly and how quickly the air mixes at that scale.
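Engineers quantify this scale dependence with the Reynolds number, Re = UL/ν, which compares how fast a flow carries momentum along (speed U over length L) with how fast the kinematic viscosity ν smooths it out. The figures below are rough, illustrative assumptions: a typical cruise speed and wing chord for an airliner, a typical speed and wing size for a housefly, and the viscosity of air near 20 °C for both (itself an approximation at cruise altitude).

```python
NU_AIR = 1.5e-5  # kinematic viscosity of air near 20 C, m^2/s (approximate)


def reynolds(speed_m_s, length_m, nu=NU_AIR):
    """Reynolds number Re = U L / nu: inertial transport versus viscous smoothing."""
    return speed_m_s * length_m / nu


# Rough, illustrative figures (assumptions, not measurements).
airliner = reynolds(speed_m_s=250.0, length_m=5.0)    # cruise speed, wing chord
housefly = reynolds(speed_m_s=2.0, length_m=0.002)    # flight speed, wing chord

print(f"airliner wing: Re ~ {airliner:.1e}")
print(f"housefly wing: Re ~ {housefly:.1e}")
```

The airliner sits tens of millions up the scale, where inertia dominates and viscosity matters mainly in thin layers near the wing’s surface; the fly works at a few hundred, where viscous forces are far from negligible.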

The stock market is another example: it is easier to predict over long time intervals than short ones. That’s why you can invest in an index fund, leave your money for 10 years, and be fairly confident you will make money. The average trajectory is upward.
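Why long horizons are easier can be made concrete with the simplest textbook model, a random walk in log price with a steady drift: the expected gain grows in proportion to time, while the random spread grows only with its square root. The drift and volatility below are illustrative assumptions, not estimates for any real index, and the function name is our own.

```python
import math


def prob_gain(years, drift=0.07, volatility=0.16):
    """Probability that a log-price random walk with the given annual drift
    and volatility ends above its starting point after `years` years.

    The log return over T years is Normal(drift * T, volatility**2 * T), so
    P(gain) = Phi(drift * sqrt(T) / volatility), with Phi the normal CDF.
    """
    z = drift * math.sqrt(years) / volatility
    return 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))


if __name__ == "__main__":
    for label, years in [("1 day", 1 / 252), ("1 year", 1.0), ("10 years", 10.0)]:
        print(f"{label:>8}: P(gain) ~ {prob_gain(years):.2f}")
```

Under these assumptions a single trading day is close to a coin flip, while a ten-year holding period ends with a gain roughly nine times out of ten.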

It is true, however, that chaos at one scale can also propagate to another. This is where we get the so-called butterfly effect. Wilson showed, in particular, that it was foolish to try to isolate length scales from one another. Rather, we have to relate them to one another and deal with the possibility that the phenomenon we are interested in depends on what’s going on at all length scales, not just one. In particular, when a system is near what is called a “critical point,” like ice melting or a magnet magnetizing, all the length scales come together to create that phenomenon, causing cascades that work their way up and down the scales so that something amazing happens and the system is transformed.
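The butterfly effect is easy to see in the x-coordinate of the baker’s map. In the sketch below (our own function names and starting values), two points that start about one part in a trillion apart separate by a factor of two every step, so after roughly 40 steps their separation is comparable to the size of the square itself.

```python
def doubling(x):
    """x-coordinate of the baker's map: x -> 2x mod 1 (exact in binary floats)."""
    return (2.0 * x) % 1.0


def circle_distance(a, b):
    """Distance between two points on the unit circle [0, 1)."""
    d = abs(a - b)
    return min(d, 1.0 - d)


def separation_history(x0=0.1, delta=1e-12, steps=45):
    """Distance between two orbits that start delta apart.

    Kept below ~50 steps: on binary floats, each doubling discards a bit,
    so both orbits would eventually collapse to 0.
    """
    a, b = x0, x0 + delta
    seps = [circle_distance(a, b)]
    for _ in range(steps):
        a, b = doubling(a), doubling(b)
        seps.append(circle_distance(a, b))
    return seps


if __name__ == "__main__":
    for n, s in enumerate(separation_history()):
        if n % 5 == 0:
            print(f"step {n:2d}: separation {s:.3e}")
```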

Likewise, in finance, small changes can influence larger trends and vice versa, so that you can arrive at a critical point where the market crashes or booms. You can never be certain that what is going on second to second isn’t going to break out and affect what happens month to month.

None of this presupposes that randomness does or does not exist. Rather, it only assumes that mixing (random or not) occurs at many different scales at different speeds. Randomness is certainly convenient to mathematicians and physicists but not required.

One of the benefits of assuming systems are random is that it lets you sweep all the external factors you don’t understand under the rug. But as a fundamental model of how the world works, randomness is untenable. Random models always have to rely on some mysterious source of entropy. They don’t allow a system to be completely closed and deterministic. Another feature of randomness is that it destroys some of the nice mathematical properties of systems, like differentiability.

If you have a particle that is fluctuating randomly according to some normal distribution, it turns out that its motion is not differentiable. The reason is that over a tiny interval of time, its typical displacement is proportional to the square root of that interval, not to the interval itself. Derivatives rely on infinitesimal times and distances being proportional, so that the tiny amounts cancel out in the ratio and leave a finite speed. Not so with randomness: the ratio of distance to time grows like one over the square root of the time interval and blows up as the interval shrinks. Unless the randomness magically vanishes at some length scale, you are stuck with non-differentiable motion. This is why we have the whole field of stochastic calculus.
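The blow-up can be checked numerically. For Brownian increments dW drawn from a normal distribution with variance dt, the expected size is E|dW| = √(2 dt/π), so the "speed" |dW|/dt grows like 1/√dt instead of settling down. The sketch below (our own function name and sample sizes) estimates that ratio for shrinking dt.

```python
import math
import random


def mean_speed_estimate(dt, n_samples=20_000, seed=3):
    """Average of |dW| / dt for Brownian increments dW ~ Normal(0, dt).

    For a differentiable path this ratio would approach a finite speed as
    dt -> 0. For Brownian motion E|dW| = sqrt(2 dt / pi), so it grows like
    1 / sqrt(dt) instead.
    """
    rng = random.Random(seed)
    sd = math.sqrt(dt)
    total = sum(abs(rng.gauss(0.0, sd)) for _ in range(n_samples))
    return total / n_samples / dt


if __name__ == "__main__":
    for dt in (1e-2, 1e-4, 1e-6):
        est = mean_speed_estimate(dt)
        theory = math.sqrt(2.0 / (math.pi * dt))
        print(f"dt = {dt:.0e}: |dW|/dt ~ {est:9.1f}  (theory {theory:9.1f})")
```

Shrinking the time step by a factor of 10,000 makes the apparent speed 100 times larger, which is exactly the square-root scaling described above.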

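It is also tied to why quantum mechanics has non-commutative operators, operators that give a different answer depending on the order in which you apply them. In Feynman’s path-integral picture, the paths that dominate are non-differentiable in just this way, and that roughness is what makes the order of position and momentum matter. That non-commutativity, in turn, is where Heisenberg’s uncertainty principle comes from. So the uncertainty principle traces back directly to this kind of roughness.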
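Non-commutativity itself can be seen without any quantum mechanics: differentiating and multiplying by x give different results depending on the order, because d/dx (x f) = f + x df/dx, so (d/dx · x − x · d/dx) f = f. In quantum mechanics momentum is p = −iħ d/dx, and the same identity becomes [x, p] = iħ. This minimal numerical check, with our own choice of test function, grid, and names, applies both orderings on a grid using central differences.

```python
import math


def commutator_check(h=0.01, half_width=5.0):
    """Apply d/dx and multiplication-by-x in both orders to f(x) = exp(-x^2).

    Uses central finite differences on a uniform grid. Returns the largest
    deviation of (D X - X D) f from f at interior points; it shrinks like h^2.
    """
    n = int(round(2 * half_width / h)) + 1
    xs = [-half_width + i * h for i in range(n)]
    f = [math.exp(-x * x) for x in xs]
    xf = [x * v for x, v in zip(xs, f)]       # X f

    def deriv(g, i):
        """Central difference of the sampled function g at grid index i."""
        return (g[i + 1] - g[i - 1]) / (2 * h)

    worst = 0.0
    for i in range(1, n - 1):
        d_of_xf = deriv(xf, i)                # D (X f)
        x_of_df = xs[i] * deriv(f, i)         # X (D f)
        worst = max(worst, abs((d_of_xf - x_of_df) - f[i]))
    return worst


if __name__ == "__main__":
    for h in (0.1, 0.01):
        print(f"h = {h}: max |(DX - XD)f - f| = {commutator_check(h):.2e}")
```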

Wouldn’t it be much simpler to say that systems are never stochastic, but are simply being mixed by internal nonlinear self-interactions or by unaccounted-for interactions with external forces?

Maybe, maybe not. We have the whole theory of distributions to deal with randomness. We have operator calculus to deal with randomness. We don’t need to replace it with complex, multi-scale nonlinear mixing operations just to get things like differentiability. Do we?

Maybe not if we only want to make predictions. But what if we actually want to understand what’s going on under the hood?

We can double down on randomness or we can question it and try to understand where its limits are.

I’m thinking in particular of the interest in Lindblad equations in quantum mechanics. These equations describe a quantum system coupled to an environment, folding the environment’s influence into irreversible, noise-like terms, and they are often invoked to explain why quantum measurements appear random. Given that quantum predictions for isolated systems work just fine without them, why introduce them unless you are trying to say that God does indeed play dice?
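To see concretely what a Lindblad term does, here is a minimal sketch of the simplest case, pure dephasing of a single qubit, with no Hamiltonian and one jump operator L = √γ σ_z. The environment’s effect appears as exponential decay of the off-diagonal "coherence" of the density matrix while the populations stay put, so a superposition ends up looking like a classical coin that is heads or tails with probability 1/2. The rate γ, the step size, and the helper names are our own illustrative choices.

```python
import math

SIGMA_Z = [[1, 0], [0, -1]]


def matmul(a, b):
    """Product of two 2x2 matrices stored as nested lists."""
    return [[sum(a[i][k] * b[k][j] for k in range(2)) for j in range(2)]
            for i in range(2)]


def dephasing_rhs(rho, gamma):
    """Lindblad right-hand side with H = 0 and jump operator L = sqrt(gamma) sigma_z:
    drho/dt = L rho L^dag - (1/2){L^dag L, rho} = gamma (sigma_z rho sigma_z - rho),
    using sigma_z^2 = I.
    """
    flipped = matmul(matmul(SIGMA_Z, rho), SIGMA_Z)
    return [[gamma * (flipped[i][j] - rho[i][j]) for j in range(2)] for i in range(2)]


def evolve(rho, gamma, t_end, dt=1e-4):
    """Forward-Euler integration of the master equation (adequate for this linear toy)."""
    for _ in range(int(round(t_end / dt))):
        d = dephasing_rhs(rho, gamma)
        rho = [[rho[i][j] + dt * d[i][j] for j in range(2)] for i in range(2)]
    return rho


if __name__ == "__main__":
    plus = [[0.5, 0.5], [0.5, 0.5]]   # density matrix of (|0> + |1>)/sqrt(2)
    rho = evolve(plus, gamma=1.0, t_end=1.0)
    print("populations:", rho[0][0], rho[1][1])
    print("coherence:  ", rho[0][1], "vs exact", 0.5 * math.exp(-2.0))
```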

What if God is a baker instead?

Ermann, Leonardo, and Marcos Saraceno. “Quantized baker map.” Scholarpedia 7.12 (2012): 9860.

Gaspard, Pierre. “Diffusion, effusion, and chaotic scattering: An exactly solvable Liouvillian dynamics.” Journal of Statistical Physics 68.5 (1992): 673–747.

Gilbert, Thomas, and Jonathan Robert Dorfman. “Entropy production in a persistent random walk.” Physica A: Statistical Mechanics and its Applications 282.3–4 (2000): 427–449.

Wilson, Kenneth G. “The renormalization group and critical phenomena.” Reviews of Modern Physics 55.3 (1983): 583.