Understand any scientific theory using first principles

Popular science is a young subject. The first acknowledged popular science book, On the Connexion of the Physical Sciences by Mary Somerville, was published in 1834. That means that books on science, written for mass consumption, are even younger than the first science fiction books, the first being Frankenstein by Mary Shelley, published in 1818. (I can’t help but notice, in a field that is currently male dominated, that female hands penned both these firsts 200 years ago.)

These days you can take your pick of topics to read. Just taking a look at the bestsellers right now we have a book on Rationality from Steven Pinker, Helgoland on quantum mechanics from Carlo Rovelli, and The God Equation on string theory from Michio Kaku. These are all well-known scientists and popular authors with impeccable scientific credentials and long histories on the public stage.

Meanwhile, Neil deGrasse Tyson’s insanely popular little book, Astrophysics for People in a Hurry, sold over 1 million copies and spent over a year in the top five, despite being a collection of previously published essays, some dating back to the 1990s!

Big names. Big science.

In addition to that we have the regular stream of science news and features from a variety of authors, both well-known and less so, from the more sensational Popular Science, Discover, and New Scientist to the more literary Quanta, Aeon, and Nautilus.

Popular science is a good thing, no doubt about it, but there is a pernicious tendency for scientists and science writers to use it in order to peddle their own pet theories and speculations.

And sometimes articles get things wrong, such as when one science article suggested that quantum entanglement would allow faster-than-light communication. Maybe in Orson Scott Card’s Enderverse, but not in the real world.

Even so, much that appears in science writing is still being debated in scientific circles. That’s partly why it’s interesting. Questions about grand unification theory, string theory, what happened before the Big Bang, inflation, black hole information, or what quantum mechanics means, are still unanswered, but, if you go to popular science venues, free from persnickety peer reviewers and rebuttals that occur in academic science journals, you will find vehement support for a variety of competing theories, many presented as being the “only” answer.

Philosophical thought experiments, like the hypothesis that we are all living in a computer simulation, are presented as potential truths.

Questions about consciousness and how that relates to quantum physics and materialism, likewise, are presented as being far more certain than they are.

In my own science writing, I tend to use the word “may” a lot in my titles to indicate that the topic is not certain. Many science writers seem allergic to this word and present their opinions as fact. Even if they are not so certain about their own ideas, they may be entirely too certain that others are completely wrong.

In the best tradition of critical thinking in science, I have developed over the years a finely tuned science BS detector. I use this detector not only for pseudoscience and bad science but also bad popular science.

This BS detector is not based on received wisdom nor does it cleave to the authority of those speaking or writing. Even the blessing of peer review isn’t sufficient for it.

No, it is based on first principles, basic tenets of scientific thinking that are applicable to any claim a scientist or journalist can make.

What are First Principles?

If you Google first principles thinking, you will get a number of articles about Elon Musk’s supposed approach to problem solving.

I don’t know how Musk solves problems. He’s certainly innovative, but based on some of his public statements, I’m not sure he uses first principles. In a well-known story, he explained his motivation for starting SpaceX this way:

Physics teaches you to reason from first principles rather than by analogy. So I said, okay, let’s look at the first principles. What is a rocket made of? Aerospace-grade aluminum alloys, plus some titanium, copper, and carbon fiber. Then I asked, what is the value of those materials on the commodity market? It turned out that the materials cost of a rocket was around two percent of the typical price.

This story is not only a poor example of first principles thinking, it is illogical. Would you, if you wanted to start, say, a package delivery service and could not afford a cargo jet, start your own aircraft company because the raw materials cost less?

I’m glad that he started SpaceX, but clearly he had motivations other than a dubious back-of-the-envelope calculation.

The Three First Principles of Science

Aristotle defined first principles as self-evident truths. These were principles that could not themselves be deduced and were hence “first” in a line of reasoning.

Because reasoning can derive from a variety of initial premises, there is no well-defined set of them, but we can say something about what they look like.

An example of a first principle is “all men are mortal”.

If we add the data that “Socrates is a man”, we derive the conclusion “Socrates is mortal”.

Thus, first principles provide logical relationships that allow us to deduce conclusions from data.

This approach works well in philosophy. By developing self-evident principles about what is real (metaphysics), what we can know (epistemology), right and wrong (ethics), and beauty (aesthetics), and then applying logic to them, we can develop self-consistent attitudes to the universe.

In science, however, we don’t deduce laws of nature from evidence. We infer them. In other words, the basic laws of nature as best we know them take the place of the self-evident truths in philosophy and the evidence follows from them logically. We do this by deriving models from laws and showing they match the evidence.

What this means is that in science first principles are the basic laws of nature that we have inferred from evidence rather than deduced from other laws.

As in philosophy, it is perfectly acceptable to question first principles, and, unlike in philosophy, in science you can also look for evidence against them.

The classic example is the toy statement “all swans are white”. The moment you find a black swan this statement is proved false. So the goal of science is, in this case, for theorists to understand what new principle would explain a black swan and for experimentalists to look for them.

Wrong science happens when scientists come up with theories that rule out white swans to explain black ones.

Worse science happens when theories predict a rainbow of swans that are never seen in order to account for the black and white ones. These theories are “not even wrong.” They are useless.

Ockham’s Razor

This leads to our first first principle, and it isn’t even a law of nature. Rather it is a self-evident philosophical truth sometimes called Ockham’s razor.

If you don’t have any other principle in your toolbox, at least have this one.

The simplest theory that explains all the evidence is best.

Note that it doesn’t say that the theory is “true”. It only says it is the best one for now. Better theories might come along but only with new evidence that the old theory can’t explain.
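As a toy illustration of the razor, here is a minimal Python sketch built on the swan example. Everything in it is an assumption made for illustration: the `Theory` class, the three candidate theories, and especially the use of a count of free parameters as a crude stand-in for "simplicity".

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Theory:
    name: str
    free_parameters: int         # crude, assumed stand-in for complexity
    predicted_colors: frozenset  # swan colors the theory allows

def explains(theory, observed):
    """A theory explains the evidence if every observed color is predicted."""
    return observed <= theory.predicted_colors

def best_theory(theories, observed):
    """Return the simplest theory that explains all the evidence, or None."""
    candidates = [t for t in theories if explains(t, observed)]
    if not candidates:
        return None
    return min(candidates, key=lambda t: t.free_parameters)

# Hypothetical candidate theories, simplest first.
theories = [
    Theory("all swans are white", 0, frozenset({"white"})),
    Theory("swans are white or black", 1, frozenset({"white", "black"})),
    Theory("swans come in a rainbow", 5,
           frozenset({"white", "black", "purple", "green", "red"})),
]

before = best_theory(theories, frozenset({"white"}))
after = best_theory(theories, frozenset({"white", "black"}))
print(before.name)  # best theory while only white swans have been seen
print(after.name)   # best theory once a black swan turns up
```

The rainbow theory also explains both data sets, but it never wins because a simpler theory does the same job. The best theory changes only when new evidence arrives that the old one cannot explain.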

Falsification

Ockham’s razor helps us identify the best from a group of competing theories, but how do we know if theories are wrong?

We need another first principle here.

In order for a theory to be considered, it must be “falsifiable”.

Evidence must be possible that can prove the theory false, like the black swan, and a responsible theorist should always suggest reasonable tests of their theory. Theories that cannot be proved false with evidence may still be philosophically valid but cannot be admitted to the halls of science.

For example, most quantum interpretation theories like Copenhagen, Many Worlds, and so on are not falsifiable and so rightly belong to philosophy — metaphysics in particular. You can still argue about them, but you can’t falsify them.

Theories that have a lot of free parameters (tunable variables) are particularly troublesome here because their proponents can always suggest that evidence for the theory exists somewhere nobody’s looked, ad infinitum.

For example, I propose a theory that predicts white, black, and purple swans, but I can put the purple ones wherever I want. If they aren’t in Australia, then they are at the bottom of the ocean. If they aren’t there, then they are on Mars.

Unfortunately, this is a classic tactic of the charlatan, but quite a few scientists have tricked themselves into going down this dark path by saying that confirmation of their ideas is “just around the corner”.

These theories fail the falsifiability test because they have too many free parameters to be proved false.
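The problem with too many free parameters can be shown with a small numerical sketch. It assumes a "theory" that is just a polynomial with as many tunable coefficients as there are observations; the function name `fit_through_points` and the three made-up data sets are hypothetical. Lagrange interpolation guarantees such a polynomial can be tuned to pass through any set of points with distinct x values, so no observation can ever count against it.

```python
def fit_through_points(points):
    """Return a polynomial (as a function) passing exactly through every point.

    Uses Lagrange interpolation: n points fix n coefficients, so a theory
    with n free parameters can always be tuned to match n observations.
    """
    xs = [x for x, _ in points]
    ys = [y for _, y in points]

    def model(x):
        total = 0.0
        for i, (xi, yi) in enumerate(zip(xs, ys)):
            term = yi
            for j, xj in enumerate(xs):
                if j != i:
                    term *= (x - xj) / (xi - xj)
            total += term
        return total

    return model

# Three made-up, mutually contradictory sets of "observations".
datasets = [
    [(0, 1.0), (1, 2.0), (2, 3.0), (3, 4.0)],
    [(0, 4.0), (1, -1.0), (2, 7.0), (3, 0.0)],
    [(0, 0.0), (1, 0.0), (2, 0.0), (3, 100.0)],
]

for data in datasets:
    model = fit_through_points(data)
    worst_miss = max(abs(model(x) - y) for x, y in data)
    print(f"worst miss: {worst_miss:.2e}")
```

The same four-parameter "theory" matches all three data sets, even though they contradict one another. A straight line, with only two parameters, could match the first set and would be ruled out by the other two, which is exactly what makes it testable.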

Theory Replacement

Once you have a theory that passes these two tests, you need a third one to decide what to do with it.

The third principle is this:

A scientific theory may replace a previously accepted theory if it passes the other two tests and the one(s) it is replacing does not.

This principle points to the iterative nature of science as a human institution and the strict criteria for advancing the state of human knowledge in science. Scientists who are itching to replace existing theories with their own must meet these criteria first.

An example is when Einstein’s General Relativity (GR) replaced Newton’s law of gravitation because GR could explain the anomalous precession of the orbit of Mercury and light bending around the Sun, while Newton’s could not.
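The Mercury result is easy to check on the back of an envelope. The sketch below uses the standard textbook GR formula for the extra perihelion advance per orbit, 6πGM / (c²a(1 − e²)), with rounded reference values for the Sun and Mercury. The constant and function names are my own choices for illustration.

```python
import math

# Standard reference values (rounded), in SI units.
GM_SUN = 1.32712440018e20   # gravitational parameter of the Sun, m^3/s^2
C = 299_792_458.0           # speed of light, m/s
A_MERCURY = 5.7909e10       # semi-major axis of Mercury's orbit, m
E_MERCURY = 0.2056          # orbital eccentricity
PERIOD_DAYS = 87.969        # orbital period, days

def gr_precession_per_orbit(gm, a, e, c=C):
    """Extra perihelion advance per orbit predicted by GR, in radians."""
    return 6 * math.pi * gm / (c**2 * a * (1 - e**2))

# 36525 days in a Julian century.
orbits_per_century = 36525 / PERIOD_DAYS
radians = gr_precession_per_orbit(GM_SUN, A_MERCURY, E_MERCURY) * orbits_per_century
arcsec_per_century = math.degrees(radians) * 3600
print(f"{arcsec_per_century:.1f} arcseconds per century")
```

The result comes out at roughly 43 arcseconds per century, which is the size of the anomaly that Newtonian gravity left unexplained.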

Virtually every scientific claim you will encounter fails one of these tests unless it is derived from existing first principles. A replacement of physical law is extremely rare and often takes decades of flurried activity to sort out.

A potential replacement is happening now with Dark Matter and Dark Energy. Right now the accepted theory is the Lambda-Cold Dark Matter theory, which is unsatisfying but undeniably simple. Lambda stands for a cosmological constant, a fixed density of Dark Energy with no explanation. Cold Dark Matter, meanwhile, means unexplained dark matter that moves slowly compared with light, as ordinary matter does, and not like “hot” dark matter that travels at close to lightspeed.

Many potential replacements and elaborations exist, but none have passed Ockham’s razor and some, like the original Tensor-Vector-Scalar theory and Brans-Dicke theory, have been falsified.

Ultimately, what we need is more data to answer basic questions: what are they? Particles and what kind? A new state of matter and which one? A previously unknown force? Answering these questions will falsify whole classes of theories and enable us to move forward.

Physics and Symmetry Principles

First principles in the physical laws of motion and forces have gone through several iterations over the past several centuries. Kepler’s laws, for example, describe the approximate motion of the planets around the Sun. Sir Isaac Newton’s universal law of gravitation, however, replaced Kepler’s laws, showing that Kepler’s were actually derived from a force called gravity and were not first principles.

Newton’s law not only explained the motion of one planet around a star, as Kepler’s did, but explained the motions of many gravitating bodies all interacting with one another as well. While the real orbits of the planets did not match Kepler’s exactly because of the various tugs that all the planets have on one another, they were almost a perfect match for Newton’s. Explaining what caused objects to fall made the theory even better.
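To see how one of Kepler's laws follows from Newton's, here is a short worked derivation under simplifying assumptions: a circular orbit of radius $r$ and a planet of mass $m$ much lighter than the star of mass $M$. Setting the gravitational force equal to the force needed to keep the planet on a circle, with $\omega$ the angular speed and $T$ the orbital period, gives Kepler's third law:

```latex
\frac{G M m}{r^{2}} = m \omega^{2} r, \qquad \omega = \frac{2\pi}{T}
\quad\Longrightarrow\quad
T^{2} = \frac{4\pi^{2}}{G M}\, r^{3}
```

The square of the period is proportional to the cube of the orbital radius, and the constant of proportionality is no longer an empirical number but is fixed by $G$ and the mass of the star.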

Later, Newton’s 17th-century laws of motion, which are first principles based on the concept of forces, gave way to the principle of least action in the 18th century and still later, in the 19th, to Hamilton’s equations, which describe motion in terms of a general concept of energy.

Still later, in the 20th century, Emmy Noether showed that every continuous symmetry of a physical law, like rotation, translation, and time translation, leads to a conservation law: angular momentum, momentum, and energy, respectively.

And this is where physics stands today. Every physical law has a description, given by an expression for a quantity called its action. Those action expressions contain symmetries. Based on the principle of least action, those symmetries translate into conservation laws.

The general principle of physics, based on Ockham’s razor and Noether’s theorem, therefore, goes like this:

A description of a physical law is the simplest action expression that contains the symmetries of that law.

This is partly why symmetries are such a huge interest in physics. Not only do the four forces obey them, but all physical laws must obey them. Any violation of a symmetry is of major interest to science because it often indicates a transition into new physics.
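The link between symmetry and conservation can be made concrete with the simplest case. Assume a system described by a Lagrangian $L(q, \dot q)$ with a single coordinate $q$ (this notation is mine, introduced for illustration). If nothing changes when the whole system is shifted in $q$, then $L$ does not depend on $q$, and the Euler-Lagrange equation that follows from the principle of least action gives:

```latex
\frac{\partial L}{\partial q} = 0
\quad\Longrightarrow\quad
\frac{d}{dt}\!\left(\frac{\partial L}{\partial \dot q}\right)
= \frac{\partial L}{\partial q} = 0
\quad\Longrightarrow\quad
p \equiv \frac{\partial L}{\partial \dot q} = \text{constant}
```

Translation symmetry therefore implies conservation of momentum. The same argument applied to rotation angles gives angular momentum.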

Quantum Physics and the Principle of Delocalization

Many of the details of physics, whether classical or quantum, relate to how the fundamental variables of the laws are described, which indicates how they interact with symmetry laws.

In classical physics we have definite values that relate to what we measure: energy, momentum, position, and classical fields like fluid velocity fields and electromagnetic fields. In quantum physics, we have non-definite, smeared out values that are “delocalized” and only appear definite when they interact with something else like a measuring device. This feature is what distinguishes quantum physics from classical and so we have the quantum first principle.

Everything observable can be delocalized.

Delocalization does not apply only to location, but to anything observable. An example of delocalization is the Schrödinger’s cat state where a quantum particle interacts with a vial of poison to put a cat into a delocalized state of life and death. Some commentators say that the cat is both dead and alive. That isn’t quite true. The cat’s state is delocalized across death and life. It has neither state until it interacts with something and then has only one.

Different quantum physics descriptions either attribute the delocalization to a mysterious object called the wavefunction, which is, itself, never seen, as in Schrödinger’s mechanics, or to a more general concept of physical attributes as in Heisenberg’s mechanics which makes no use of the wavefunction. This is why the wavefunction, although popularized as a real and central part of quantum theory, is not a first principle since we don’t know if it is real or a computational tool.

Delocalization, on the other hand, is very much real. Any alternative theory of quantum physics has to account for delocalization somehow because it has been repeatedly observed.

One of the odd features of delocalization is that when measurement is applied to a delocalized quantum object, it becomes localized through a process called decoherence. This process does not act like a force, however, in that it doesn’t propagate through space and time. It just happens all at once. Einstein called this “spooky action at a distance”.

Consider an example: when symmetry causes two quantum particles to have related attributes, they are called entangled. They share a delocalized description that stretches across time and space. When one is measured, the whole pair becomes localized.

It can appear as if the information from the measurement of one particle is transmitted faster than light to the other particle simply because one particle being measured localizes both to some degree. There are various ways to account for this, some of which involve faster-than-light communication and some of which don’t, but few explanations meet the falsifiability criterion for being real scientific theories. We just don’t know how it happens.

Any alternative theory of quantum physics has to account for this feature of localization as well.
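Whatever the underlying explanation, the standard quantum formalism predicts that an entangled pair cannot be used to send a message. The sketch below assumes one particular entangled state (a two-particle Bell state), two hypothetical observers I will call Alice and Bob, and the usual rule that probabilities are squared amplitudes. Alice is free to choose her measurement angle `theta`; Bob always measures the same way.

```python
import math

def joint_probabilities(theta):
    """P(a, b) when Alice measures at angle theta and Bob in the 0/1 basis."""
    amp = 1 / math.sqrt(2)
    state = {(0, 0): amp, (1, 1): amp}  # Bell state: equal parts |00> and |11>
    # Alice's two possible outcomes, written as components along |0> and |1>.
    alice_basis = {
        0: (math.cos(theta), math.sin(theta)),
        1: (-math.sin(theta), math.cos(theta)),
    }
    probs = {}
    for a, (c0, c1) in alice_basis.items():
        for b in (0, 1):
            amplitude = c0 * state.get((0, b), 0.0) + c1 * state.get((1, b), 0.0)
            probs[(a, b)] = amplitude ** 2  # amplitudes are real here
    return probs

def bob_marginal(theta):
    """Bob's outcome probabilities, summed over Alice's unknown result."""
    probs = joint_probabilities(theta)
    return [probs[(0, b)] + probs[(1, b)] for b in (0, 1)]

for theta in (0.0, math.pi / 8, math.pi / 4, 1.0):
    p0, p1 = bob_marginal(theta)
    print(f"theta={theta:.3f}  Bob sees 0: {p0:.3f}, 1: {p1:.3f}")
```

Bob's statistics stay at fifty-fifty no matter which angle Alice picks, so he learns nothing about her choice from his own particle. The correlations only show up later, when the two compare notes by ordinary, slower-than-light means.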

Principles of Quantum Gravity

Theoretically, quantum gravity should be as simple as applying the principle of delocalization to Einstein’s general relativity. Unfortunately, this process, called quantization, runs into problems almost immediately. These problems can be described as mathematical, but they are really physical. Delocalization means that you have arbitrary gravitational fields, including extremely intense, energetic ones at small scales creating a “quantum foam”, a frothy, energetic substrate to the apparently placid cosmos. GR, however, breaks down as the scale goes to zero.

This suggests that, at small scales, gravity behaves differently than it does at large scales. We can’t measure gravity at small scales, so we don’t know what that behavior is, but it has to be something.

A few have argued that there is a “smallest” scale to the universe, perhaps the “Planck” scale, and physics just “stops” there. Such a hypothesis is possible but requires an explanation of exactly how and where it stops and how to test that.

Others have argued that General Relativity is an “effective” theory at large scales that becomes a completely different theory at small scales. (This is true of the weak force that causes radioactive decay.) There, it combines with the other three forces to form a single force and possibly combines with matter as well. This is the route Grand Unified Theories take, including string theory.

Some argue that we just haven’t figured out how to apply quantization to gravity correctly because other forces and matter are easier. This route is known as asymptotic safety. My five-dimensional theory of quantum general relativity takes this route.
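For a sense of how small the Planck scale mentioned above is, the Planck length can be computed from three measured constants as the square root of ħG/c³. This is a quick sketch using rounded reference values; the function and constant names are my own.

```python
import math

HBAR = 1.054571817e-34  # reduced Planck constant, J*s
G = 6.67430e-11         # Newton's gravitational constant, m^3/(kg*s^2)
C = 299_792_458.0       # speed of light, m/s

def planck_length(hbar=HBAR, g=G, c=C):
    """Length scale built from hbar, G, and c: sqrt(hbar * G / c^3)."""
    return math.sqrt(hbar * g / c**3)

print(f"{planck_length():.3e} m")
```

That is about 1.6 × 10⁻³⁵ meters, roughly twenty orders of magnitude smaller than a proton, which is why direct measurements of gravity at that scale are so far out of reach.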

Without some precise measurements of gravity at small scales, the likelihood of having a real theory of quantum gravity is small. The parameter space for different theories is too large to guess with so little data. Perhaps we’ll get lucky and a theory will come along that predicts the universe as we see it, including dark matter, dark energy, and cosmological features like flatness and homogeneity, with only a few free parameters. So far, no luck.

How to come up with new theories

You may ask how people come up with new principles and theories. In some cases, it comes from looking at data and trying your best to model what is happening. These are “empirical” laws. Empirical laws are useful but not satisfying. They give you the what but not the why.

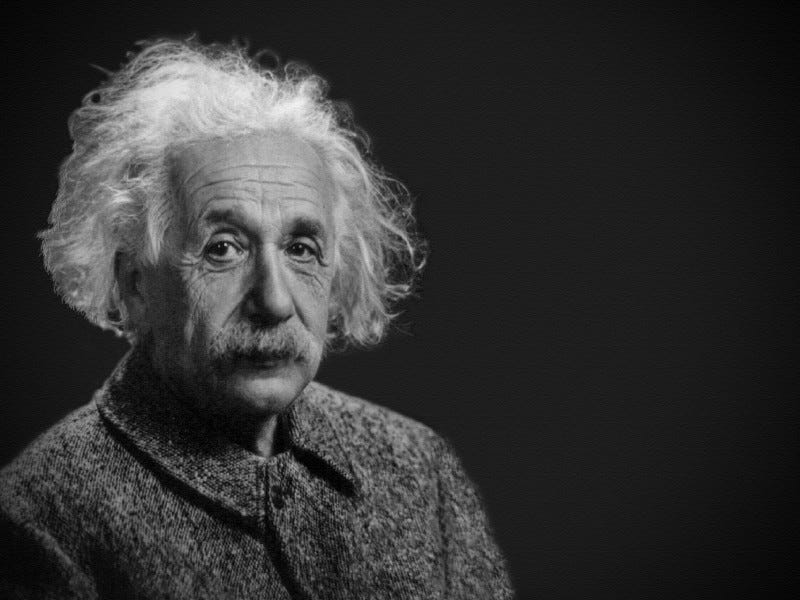

In other cases, you come up with some self-evident principles that you think apply universally and then try to deduce real phenomena from those. If it matches what you see, then you may have an elegant new theory. This is the first principles approach. Albert Einstein was a master and would develop his self-evident principles from thought experiments about matter, light, energy, and motion.

Some question whether Einstein’s approach is possible today because we live in a quantum world where reality doesn’t make a lot of intuitive sense. I may be wrong, but I think the human mind is more flexible than that. Einstein developed many of his intuitions by studying physics of his day, and we can do the same. We may only be a few thought experiments away from a new theory of gravity or something, perhaps, even stranger than that.

If you want to read more about first principles in science, check out my book: The Infinite Universe: A First Principles Guide on Amazon.