Conscious AI may be within reach

New insights into the human brain bring the dream of intelligent machines within reach.

From Skynet to Star Trek’s Data, we are fascinated by, and a little bit scared of, machine consciousness. Before we start figuring out how to burn Asimov’s three laws of robotics into positronic brains, however, we need to figure out what it means for us to be conscious.

A theory called the Global Workspace Theory (GWT), now about 30 years old, has been gaining ground in cognitive neuroscience as the best theory of consciousness so far. What’s more, AI researchers are starting to pay attention, beginning what may be the race to build a conscious machine.

Before we get into GWT, though, let’s look at how people have thought about consciousness in the past.

Up until the 17th century, people assumed that the gods or God conferred consciousness unto inanimate matter. From Genesis 2,

The Lord God formed the man from the soil of the ground and breathed into his nostrils the breath of life, and the man became a living being.

From Norse stories about Odin to Australian aboriginal myths about Yhi, the gift of life was synonymous with consciousness and came from divine power, not from anything specifically innate to us. It could be given and taken at will. Only the Greek philosopher Plato suggested that human beings might have some kind of immortal soul.

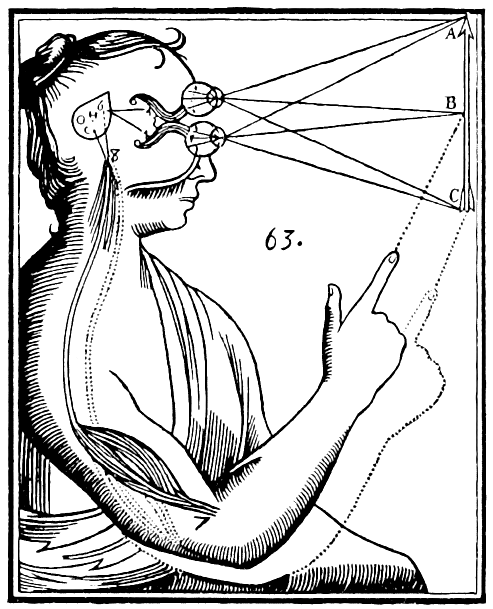

In the 17th century, Descartes proposed his theory of dualism. He wrote that human beings possessed a non-corporeal mind which was separate from the body. The mind and the immortal soul were treated as a single entity that would live on after the body had died.

His idea is illustrated in his drawing. When a person sees an object, he theorized, the image is passed on to the pineal gland, from where it goes into the immaterial soul. (We now know the pineal gland regulates sleep cycles and circadian rhythms.)

William James, one of the 19th-century founders of the field of psychology, later coined the term “stream of consciousness”. By stream he meant that a person always holds on to their own consciousness. Even after a period of unconsciousness, such as sleeping, you still retain only the consciousness of your own life and history. Your consciousness is somehow attached to you and your body. He did not mean, however, that consciousness was in any way smooth, like a flowing river. He pointed out that consciousness, while bound to a single individual, was more like a chain of events and experiences and that somehow this depended on a person’s attention.

One hundred years later, we know far more about how the brain works but still can’t point to the seat of consciousness. Scientists believe, however, that the best way forward is to assume it is built into a vast array of neuronal structures, as it must be to be able to access so much mental activity.

The cognitive science of consciousness begins with the assumption that people have consciousness because they refer to their own consciousness all the time. People say, “I suddenly realized I loved her” or “I knew he had my keys, so I went to his office”. Words like “realized” and “knew” refer to conscious awareness of information in the brain.

Our vocabulary betrays our consciousness when we refer to believing, pretending, and knowing.

From a scientific point of view, we have to take these statements seriously. As long as the researchers aren’t just making up statements out of their own desire to believe in consciousness, they can be taken as data.

And we can ask questions about these statements, such as: what brain states are associated with them, and can conscious states be reduced to neuronal activity patterns?

One of the problems with studying consciousness is that it differs from the study of basic actions. Consciousness is internal and subjective unlike, say, moving my arm, which has a clear neuronal activity pattern. I can take a behaviorist view of motor actions: neural activity leads to motion of the arm. Whether the subject is aware of the movement makes no difference to that account.

Consciousness is introspective, however, so we have to depart from behaviorism and treat statements and reports of conscious experience as primary data. That doesn’t confer any sort of magic on introspections. When people have their corpus callosum severed, they often come up with erroneous explanations for why their right half is seemingly behaving independently. Likewise, people have hallucinations and delusions. In the case of hallucinations, people see things that aren’t there, like floating heads. Despite the incorrect neural activation causing them, these are genuine, conscious experiences.

For decades, the argument about consciousness has largely been between dualists and behaviorists. In both cases, consciousness was treated as being beyond scientific study. Dualism has even led to a search for consciousness outside of classical physics, such as Penrose’s quantum theory of consciousness.

Chalmers’s zombie paradox, meanwhile, proposes that you could copy a person’s brain neuron for neuron and have an unconscious zombie that behaves in exactly the same way as a conscious person.

You can believe what you want about the zombie paradox, but you can’t say why or why not with any certainty yet. The “hard” problem of consciousness, the question of why we are aware of our minds at all (sometimes called phenomenal consciousness), is a long way from being understood. Yet we can understand, through cognitive neuroscience, the easier problem of how consciousness works as a mechanism, i.e., how do we become conscious of some things and not others?

Figuring out how consciousness works turns out to be partly a process of elimination. In other words, we have to know how unconsciousness works.

A study of patients with brain lesions (damage) preventing them from being aware of a particular area of their visual field showed that a whole lot of complex tasks can be done unconsciously. In this study, patients and controls with normal visual awareness were asked to point at objects. When these objects were in the patients’ blind spots, they were still able to point at them! This was no pin-the-tail-on-the-donkey, since many of them did as well as the controls. It was clear that their brains were unconsciously carrying out a complex set of tasks involving their visual and motor cortices.

Similar tests can be done with non-lesioned people by masking stimuli or diverting attention. There is a famous video of a basketball game in which many people watching don’t see (aren’t conscious of) the man in the gorilla suit who walks through.

Study participants were asked to carry out complex counting and ball watching tasks that made their conscious attention completely focused on the game, so that they couldn’t see the person in the gorilla suit at all.

This gets into the other important part of consciousness: attention. It turns out that there is no conscious perception without attention. While your brain can carry out many unconscious tasks, it cannot be conscious of anything if it is not paying attention.

Given all the things that people can do unconsciously, including complex tasks, the benefits of consciousness fall into three basic classes:

Information maintenance

Novel combinations of operations

Intentional behavior

Consciousness appears to amplify information, making it stick around longer. George Sperling’s (1960) classic experiment had subjects remember letters flashed on a screen and other stimuli. He showed that without any external stimulation, tactile and audio memories last for a few seconds while visual memories only last milliseconds. Attention, however, can focus consciousness on details and retain them for much longer.

When people have to think strategically or develop new ways of solving a problem, consciousness is also required. When driving a route similar to my morning commute, I have often let my attention wander and found that I was on the way to work rather than where I was actually going. That is a case where consciousness is required to inhibit routine behavior.

A classic study of this phenomenon had subjects classify a colored string as green or red. Before being shown the string, they would be given a priming word: GREEN or RED, but sometimes the color and the word would be different. When the color and word were the same, people were able to match them more quickly. An interesting thing happened, however, when the experimenters had the colors be different from the word about 75% of the time. In that case, the subjects were faster when the word and string didn’t match, but only if they were able to have conscious awareness of the word. When the word was masked, as with the gorilla, they were not able to employ that trick. That showed that novel tactics require conscious attention.

Intentional behavior is the final area where consciousness is important. This means that, in order for someone to initiate a particular act spontaneously, they have to be conscious. Go back to the experiment with the blindsight patients: they were able to point at an object in their blind spot, but they could not initiate that pointing spontaneously. In other words, if asked to point out a flower pot, they could do so. But they couldn’t point to something in their blind spot and say “that’s a flower pot”. That suggests that unconscious processing only goes so far, requiring a stimulus-response, forced-choice scenario to work.

Having circumscribed the boundaries of consciousness and unconsciousness based on global interfacing of neural abilities and selective attention, a neurological framework suggests itself: the Global Workspace.

A person’s brain is made of many different, specialized modules. Each module is responsible for a particular task, and many of these modules, such as the visual and motor cortices, are connected to one another so that certain tasks, like driving a car or walking, can be automated. Unconscious operations can take place in parallel so long as they don’t conflict with one another.

The conscious mind, on the other hand, is not modular. Rather, it is a central executive or global workspace. This global workspace does not exist in any one part of the brain but is distributed throughout it with workspace neurons in all the areas that consciousness can access. This explains why certain brain functions like blood pressure regulation are not under direct conscious awareness.

Workspace neurons are particularly concentrated in the prefrontal cortex, which is related to planning, decision making, and execution. A high concentration is also found in the anterior cingulate, responsible for ethics and reward anticipation. These areas seem to be responsible for setting tasks for the rest of our conscious mind to follow as well as determining the cost-benefit and ethics of our actions.

One of the benefits of the global workspace is that it connects areas of the brain that aren’t normally connected: perception, motor control, long-term memory, evaluation, and attention.

Attention is the mechanism by which different modules are connected, mobilized for conscious use, and made available to the workspace. When attention isn’t on a particular module, those workspace neurons are not active and that area is functioning independently and unconsciously.

What this means is that you can’t point to the seat of consciousness (like the pineal gland) because it is (almost) everywhere in the brain and activates in areas where it is called by attention.

Since Bernard Baars introduced the theory in the 1980s and 90s, Stanislas Dehaene has extended it into a neuronal model with fast activation of neurons that broadcast data back and forth, creating a single, coherent impression of sensory information. This creates the sense of consciousness as having a singular, integrative quality.

Deep learning scientists have now (in 2021) taken the global workspace theory as a model for how to create conscious machines. Ever since it was introduced, deep learning has made amazing strides in developing powerful artificial intelligence modules that can carry out complex tasks, but in order to achieve the integrative quality of consciousness, a workspace model must bring together many different kinds of specialized deep learning neural networks.

Key features of the global workspace such as mobilization of specialized modules, long distance connections, and attention-based mobilization are critical in the design.

Let’s imagine we want to design an artificial brain called GWNN (Global Workspace Neural Network). We’ll call her Gwen.

Gwen’s brain features both feed-forward networks like classifiers to ID objects as well as generative networks that create images from inputs. Generative networks are required to give Gwen a mind’s eye, the ability to imagine and create. She can even have generative adversarial networks (in which a generative network creates images to fool a classifier) to create counter-factual information, such as simulations and alternative scenarios. This would give her the ability to imagine and dream. Autoencoders would reduce all the data flooding into her brain to manageable dimensions so that she can make quick decisions.

Suppose we have Gwen in the lab one day and we put her brain through a scenario where she is living in a house in the country. Her global workspace connects her vision, language, and movement networks together. As she walks through her living room, her movement network is active. She sees a cat. The cat image draws the conscious attention of her visual network. She forms a plan: pet the cat. The vision network connects through the workspace to the movement network to bend down and pet the cat. Suppose the cat turns out to be a picture of a cat. Now her language network activates in her consciousness instead and the word “cat” emerges.

This scenario includes both top-down and bottom-up behavior. Bottom-up behavior is when a stimulus engages her conscious attention, causing global workspace ignition, as when she sees the cat. Top-down behavior is when her attention is directed to a particular purpose so that her decisions are feeding into her visual network. Recall that she cannot be conscious of the entire scene before her, so she must select particular places to pay attention to consciously. The top-down direction tells the global workspace which parts of the visual input to select out. So we see these playing out with external stimuli igniting consciousness in the global workspace and the workspace feeding back into the stimulus networks recurrently to mobilize those and other modules into consciousness.

VanRullen and Kanai propose a version they call the Global Latent Workspace (GLW) for Gwen. The GLW is amodal, meaning that it dynamically mobilizes different sensory perception modules that provide information about the same things, like the cat’s appearance, its meow, and the feel of its fur. These share a “latent” space, meaning a low-dimensional space that captures the structure of the input or output of the modular systems. This latent space performs neural translation between the different modules.

For example, if Gwen now imagines the cat in her mind’s eye, her generative network produces an image of a cat which the latent workspace then translates (mathematically and computationally from one vector to another) into her language centers so the word “cat” appears in her mind as well. Both of these are copied into the workspace so they exist simultaneously in her consciousness as internal copies.

This means that her GLW is actually an unsupervised network translation space in that it is trained to translate sensory or motor outputs from one set of modules into the inputs for other sets. It glues everything together.
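As a rough illustration of translation through a shared latent space, here is a minimal sketch in plain Python. Everything in it is an assumption made for the example: the `Module` class, the `translate` helper, the tiny dimensions, and the hand-picked linear weights. In the proposal described above the translators would be learned without supervision, not written by hand.

```python
# Hypothetical sketch: two modules exchange content through a shared latent space.
# Names, dimensions, and weights are illustrative assumptions, not a real model.

def matvec(matrix, vector):
    """Multiply a matrix (list of rows) by a vector (list of floats)."""
    return [sum(w * x for w, x in zip(row, vector)) for row in matrix]


class Module:
    """A specialized module with a linear map into and out of the workspace."""

    def __init__(self, name, encoder, decoder):
        self.name = name
        self.encoder = encoder  # module space -> latent space
        self.decoder = decoder  # latent space -> module space

    def encode(self, x):
        return matvec(self.encoder, x)

    def decode(self, z):
        return matvec(self.decoder, z)


def translate(source, target, x):
    """Route a representation from one module to another via the latent space."""
    latent = source.encode(x)
    return target.decode(latent)


# Vision uses 3 features, language uses 2, and the shared latent space has 2.
vision = Module(
    "vision",
    encoder=[[1.0, 0.0, 0.0], [0.0, 1.0, 0.0]],
    decoder=[[1.0, 0.0], [0.0, 1.0], [0.0, 0.0]],
)
language = Module(
    "language",
    encoder=[[0.0, 1.0], [1.0, 0.0]],
    decoder=[[0.0, 1.0], [1.0, 0.0]],
)

cat_image = [0.9, 0.1, 0.5]
cat_word = translate(vision, language, cat_image)
print(cat_word)
```

The point of the design is that each module only needs one encoder and one decoder to the shared space, so adding a new module does not require a separate translator for every existing pair.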

The GLW can connect a large variety of networks together. These may or may not have analogues in the human brain. Examples are object detection, object recognition, intuitive physics, world models, memory, motor control, natural language processing, text-to-speech, and so on.

Another feature of Gwen’s GLW is what is called cycle consistency. Cycle consistency is commonly used in language translation. The idea is that words in different languages should be close together semantically (in terms of their meaning) in different networks. This allows sentences to be translated from, say, French to Chinese and back again without garbling the sentence. That is cycle consistency. The same is true for the GLW. If Gwen sees a cat in her mind’s eye and produces the word “cat” in her language center, she should be able to produce an image of a cat from the word “cat” as well. It goes both ways.
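One way to make the round-trip idea concrete is a cycle-consistency loss: translate a vector from one module to another and back, then measure how far the result drifts from the original. The sketch below is only an illustration; the function name `cycle_loss`, the two-feature vectors, and the toy linear translators are assumptions chosen so the arithmetic is easy to follow.

```python
# Hypothetical sketch of a cycle-consistency check between two toy translators.

def matvec(matrix, vector):
    """Multiply a matrix (list of rows) by a vector (list of floats)."""
    return [sum(w * x for w, x in zip(row, vector)) for row in matrix]


def cycle_loss(forward, backward, x):
    """Squared error between x and its round trip x -> forward -> backward."""
    round_trip = matvec(backward, matvec(forward, x))
    return sum((a - b) ** 2 for a, b in zip(x, round_trip))


# Toy "image -> word" translator and two candidate "word -> image" translators.
image_to_word = [[2.0, 0.0], [0.0, 4.0]]
good_word_to_image = [[0.5, 0.0], [0.0, 0.25]]  # exact inverse of the forward map
bad_word_to_image = [[0.5, 0.0], [0.0, 0.5]]    # distorts the second feature

cat = [1.0, 1.0]
good_loss = cycle_loss(image_to_word, good_word_to_image, cat)
bad_loss = cycle_loss(image_to_word, bad_word_to_image, cat)
print(good_loss, bad_loss)
```

A training procedure would adjust the translators to push this loss toward zero, which is what lets the pair be trained without matched image-word examples.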

Gwen also has a way of directing her attention. Internally, this is accomplished with a query-key matching system, not unlike a database search. If you have a lot of information, the GLW needs a way to query for the information it wants and retrieve that information from the modular systems. For example, if querying the memory for an image of a cat, the query takes the form of the attention while the key retrieved (the image of the cat) completes the attention cycle. Given some task Gwen is trying to complete such as lifting a teacup, she must maintain her focus on the cup. The GLW discards visual and tactile information irrelevant to her queries for information about the cup.

Attention can be top-down in the sense that Gwen’s decision making process is looking for particular information and matching queries and keys. It can also be bottom-up. If somebody drops a teacup and smashes it behind her, Gwen may react to pay attention to what just happened. In this case, the loud noise contains a kind of “master key” that responds to all queries, altering the attention. At that point, the top-down attentional system resumes as she sees an engineer looking down at the broken remains of a teacup. What kinds of events would initiate that kind of reaction are an open question.
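The query-key matching described above can be sketched as a softmax over dot products, the usual form of attention in deep learning. The module names, the vectors, and the `salience` bonus used to imitate the "master key" are assumptions made for this example; the source does not say how such a key would actually be implemented.

```python
import math

# Hypothetical sketch of query-key attention over module keys.

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def attention_weights(query, keys, salience=None):
    """Softmax over query-key match scores, plus an optional bottom-up bonus."""
    names = list(keys)
    salience = salience or {}
    scores = [dot(query, keys[n]) + salience.get(n, 0.0) for n in names]
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return {n: e / total for n, e in zip(names, exps)}


# Each module advertises a key describing what it currently holds.
keys = {
    "vision_cup": [1.0, 0.0],
    "touch_cup": [0.8, 0.2],
    "hearing": [0.0, 1.0],
}

# Top-down: the workspace asks for information about the cup.
query = [4.0, 0.0]
focused = attention_weights(query, keys)

# Bottom-up: a loud crash gives hearing a large bonus regardless of the query.
startled = attention_weights(query, keys, salience={"hearing": 10.0})

print(max(focused, key=focused.get), max(startled, key=startled.get))
```

With the same query, the winner shifts from the cup's visual module to hearing once the salience bonus is added, which is the behavior the smashed-teacup example describes.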

An interesting feature of Gwen’s GLW is that information is always broadcast throughout her GLW so that all modules always receive information all the time. There is no conscious control over which modules receive information. Thus, each module (auditory, visual, motor, memory, etc.) can always provide meaning to any sensory experience. This is what makes the consciousness “meaning making”. It correlates all of experience to form a whole. If modules aren’t relevant to a particular task, however, they will not be used. This means that Gwen has what neuroscientists have called a “penumbra of consciousness”, all the things we could be conscious of but aren’t because of the attentional system.
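The broadcast idea can be sketched in a few lines: every module receives every message, and each one decides for itself whether the content is relevant. The `Module` class, the topic labels, and the `broadcast` function here are hypothetical and only meant to show the shape of the mechanism.

```python
# Hypothetical sketch of global broadcast with per-module relevance.

class Module:
    def __init__(self, name, relevant_topics):
        self.name = name
        self.relevant_topics = set(relevant_topics)
        self.inbox = []

    def receive(self, message):
        """Store the broadcast and report whether this module can use it."""
        self.inbox.append(message)  # every module hears every broadcast
        return message["topic"] in self.relevant_topics


def broadcast(modules, message):
    """Send one message to all modules and return the names that respond."""
    return [m.name for m in modules if m.receive(message)]


modules = [
    Module("vision", ["cat", "teacup"]),
    Module("language", ["cat"]),
    Module("motor", ["teacup"]),
]

responders = broadcast(modules, {"topic": "cat", "content": "furry, four legs"})
print(responders)
```

Modules that receive the message but do not respond correspond loosely to the "penumbra": the content reached them, but they play no part in the current task.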

It is clear that such a system would be immensely valuable to Gwen. Without a GLW or similar workspace, her modules would either be operating independently or be connected in restrictive ways. She would only be capable of carrying out rote tasks. She would have no imagination or creativity and be unable to come up with novel ways of mobilizing her modules to solve problems. In addition, this system allows her to transfer learning from one module to another. For example, if she learns something by watching another person do it, she can imitate those motions by transfer learning from the visual to the motor networks. This takes time and effort, of course, but is more rewarding than trying to learn it separately or in some rote way. She can also engage in whole counterfactual reasoning scenarios using all of her modules and explore what-if cases more easily.

One lingering question is whether this means that Gwen is conscious. It depends on your definition. Gwen is capable of reasoning, perception, and execution; therefore, she has what is called “access consciousness”. Whether she experiences subjectively what it is to be her is an open question. Philosophers have questioned whether digital beings can be conscious because digital information can always be reduced to a table-lookup system, which is manifestly unconscious. It is not obvious why an analog being would be any different, though, because their minds and ours are made of atoms, molecules, and electrons, which are themselves discrete. Is Roger Penrose correct that we have to turn to quantum theory to explain subjective experience?

It may be that we will have to ask Gwen questions about her own experiences and, based on her answers, determine if she has consciousness or not. In the end, such tests do not determine the existence of consciousness (just ask the nearest chatbot), and the zombie paradox suggests that we cannot know for sure of anyone’s consciousness but our own. Having another being that behaves as if it were conscious, however, would provide science with another data point in determining what the boundaries of subjective experience are. At some point, we will also have to decide the ethical implications of creating such beings, both in terms of what they can do and what we can do to them.

That will not stop anyone from creating them though, and the sooner we understand what we are dealing with the better.

Dehaene, Stanislas, and Lionel Naccache. “Towards a cognitive neuroscience of consciousness: basic evidence and a workspace framework.” Cognition 79.1–2 (2001): 1–37.

VanRullen, Rufin, and Ryota Kanai. “Deep learning and the Global Workspace Theory.” Trends in Neurosciences (2021).